This article was originally published on LinkedIn by our CTO on September 11th, 2018. It has been updated and re-posted on this blog.

Intro

I attended VMworld this year in Las Vegas the week of August 27th, 2018. As every year, there was a lot more going on at the conference than just VMware. Many storage vendors were present to show off their new technology, and others were there as part of the VMware ecosystem. My focus this year was on High-Performance Computing (HPC), Big Data and Artificial Intelligence (AI), and more specifically on Machine Learning (ML) and Deep Learning (DL) workloads rather than the traditional virtual workloads.

In the corporate IT world, virtualization and its benefits are well understood. The efficient usage and consolidation of infrastructure, combined with flexible management tools, make virtualization an easy choice. It is a harder sell in the HPC world, where bare metal is assumed to be the only option for maximum performance. Virtualization technology has improved significantly, however. The overhead associated with it is a fraction of what it used to be, and latency is now very competitive, which makes the overall virtualization picture worth looking at.

In this blog, I first talk about the new vSphere SKU for high-performance workloads. Next, I cover the features of vSphere 6.7 and the upcoming 6.7 Update 1 that relate to hardware accelerators such as GPUs. Finally, I list the HPC/Big Data/AI sessions at VMworld that I wanted to see. If you missed VMworld this year you are in luck, as VMware recorded all the sessions and they can be viewed online. I have provided a direct link to each session for your convenience.

HPC/Big Data

The new HPC workloads demand more flexible infrastructure, change frequently and need varying amounts of resources to process them. Virtualization can improve the efficiency of resource usage and reduce the time it takes to reconfigure, because resources can be adjusted as requirements change. On top of that, there is the benefit of using the vSphere tools needed to manage, support and run workloads securely. Indeed, the VMware ecosystem delivers a complete environment. If you have used vSphere in the past, then you are already familiar with the tools.

The resulting efficiency closes the performance gap with bare metal. In some cases vSphere delivers even faster results with comparable latency.

vSphere for HPC/Big Data

VMware has increased its focus in the last few years on the growing markets for HPC, Big Data and AI. They have spent a lot of effort adding support to vSphere to accommodate those markets. It is a work in progress, but the improvements and added features are impressive. All the knowledge they have built up with virtualization over the years can be a significant competitive advantage and benefit the newer high-performance workloads driven by data.

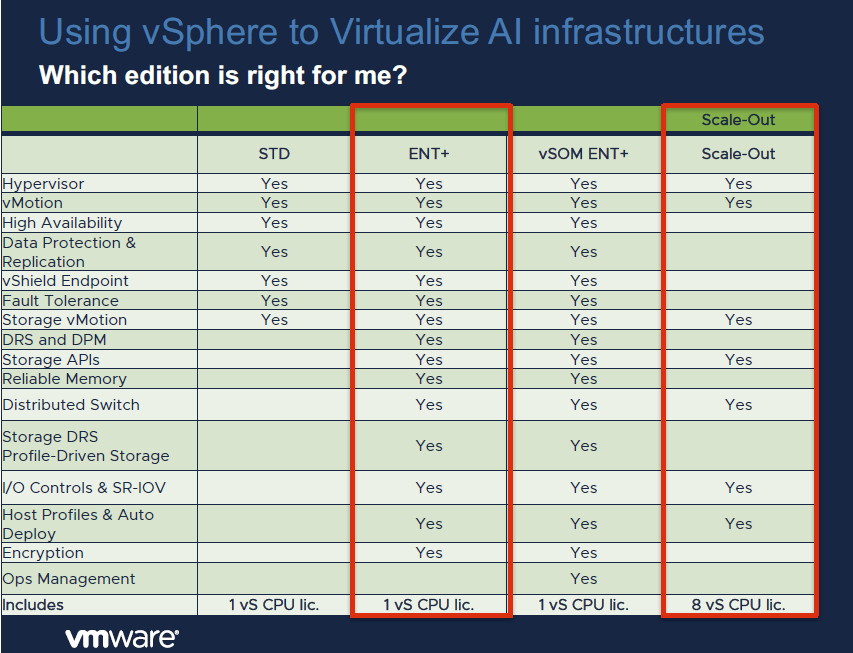

About a year ago, VMware introduced a new addition to their vSphere product line specifically for the HPC and Big Data market, called “vSphere Scale-Out”. It is a version of vSphere that contains the essential core features aimed at HPC and Big Data workloads. According to VMware, it will be licensed exclusively for HPC and Big Data, and you can expect a reduced price compared to the vSphere Enterprise edition. Some of the key features included in the vSphere Scale-Out edition are the ESXi Hypervisor, vMotion, Storage vMotion, Host Profiles, Auto Deploy, and Distributed Switch. For a comparison of the various vSphere editions, see Figure 1 from VMware.

AI

The use of Artificial Intelligence with accelerated hardware such as GPUs and FPGAs is becoming mainstream. This is due to the complexity of the algorithms, which require a higher level of parallelization, and the vast amounts of data to be processed. The use of GPUs is nothing new to VMware, as they have had Virtual Desktop Infrastructure (VDI) solutions for a long time now. They have been building on this expertise and have expanded into using GPUs as workhorses for AI. Those GPUs are sometimes also called General Purpose GPUs (GPGPUs) to avoid confusion with the GPUs used for displays.

VMware has been adding new features around HPC, Big Data and AI, mostly to facilitate the use of hardware accelerators such as GPUs. vSphere 6.7, released earlier this year, and the upcoming vSphere 6.7 Update 1 are important releases for the use of hardware accelerators. There are many new features and improvements, and some of them are listed below. For a full list of features please refer to the VMware website.

vSphere 6.7:

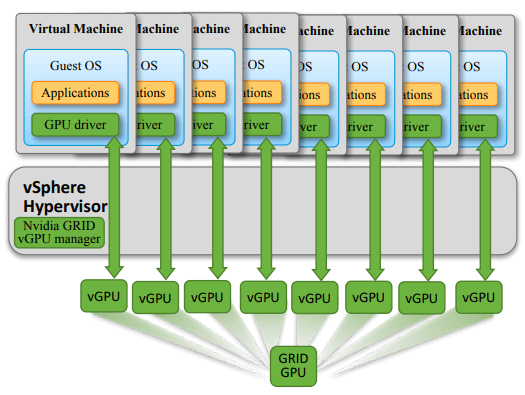

- Using and enhancing NVIDIA Grid vGPU technology.

- This technology allows you to create one or more virtual GPU instances (vGPUs) on a single physical GPU. Each vGPU instance can run a different GPU workload and is attached to a VM. Each vGPU is assigned a profile that defines the memory size per vGPU and the maximum number of vGPUs per physical GPU. Each VM has a GPU driver and is unaware that the attached vGPU is virtual.

- Figure 2 shows a single physical GPU accelerator with eight (8) vGPU instances. Each instance is attached to a single VM. Attaching more than one vGPU to a VM is currently not supported.

- Pause & Resume functionality for VMs that run GPU workloads. This feature has long been available for CPU workloads and is now available for GPUs as well.

- Another key feature is support for Remote Direct Memory Access (RDMA) for high-performance workloads that require maximum bandwidth with the lowest latency. It allows for direct memory transfers from one computer to another with minimal involvement from the CPU.

- Additionally, the ability to slice and dice a physical GPU into one or more vGPUs, combined with the Pause & Resume capabilities, makes vSphere a very competitive and attractive solution. It increases the overall utilization and efficiency of GPUs and improves the ROI, which is quite important considering the cost of GPUs.
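To make the vGPU profile idea more concrete, here is a minimal Python sketch of the arithmetic behind slicing a physical GPU. It is only an illustration and not NVIDIA's or VMware's API: the class and function names are hypothetical, the 16 GB GPU and 2 GB profile are assumed values chosen so that the result matches the eight instances in Figure 2, and it assumes the limit is simply the GPU memory divided by the per-vGPU memory.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class VGpuProfile:
    """Hypothetical stand-in for a vGPU profile."""
    name: str            # illustrative label, not a real profile name
    framebuffer_gb: int  # memory assigned to each vGPU instance


def max_vgpus(physical_memory_gb: int, profile: VGpuProfile) -> int:
    """How many vGPUs of this profile fit on one physical GPU (assumed rule)."""
    if profile.framebuffer_gb <= 0:
        raise ValueError("profile memory must be positive")
    return physical_memory_gb // profile.framebuffer_gb


def attach(vms: List[str], physical_memory_gb: int,
           profile: VGpuProfile) -> Dict[str, int]:
    """Give each VM exactly one vGPU slot; refuse to oversubscribe the GPU."""
    capacity = max_vgpus(physical_memory_gb, profile)
    if len(vms) > capacity:
        raise ValueError(f"{len(vms)} VMs requested, only {capacity} vGPUs fit")
    # One vGPU per VM, mirroring the one-vGPU-per-VM limit described above.
    return {vm: slot for slot, vm in enumerate(vms)}


if __name__ == "__main__":
    profile = VGpuProfile(name="example-2gb", framebuffer_gb=2)
    print(max_vgpus(16, profile))  # 8
    print(attach([f"vm{i}" for i in range(8)], 16, profile))
```

A larger profile gives each VM more GPU memory but fewer VMs per card, which is the trade-off to weigh when sizing for utilization and ROI.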

vSphere 6.7 Update 1:

- The release was announced at VMworld 2018 (US) and is expected to be available by the end of the quarter (VMware's quarter ends on 11/02/2018).

- vMotion for GPUs

- On top of the Pause & Resume functionality, the new release adds vMotion for NVIDIA vGPU powered VMs.

- An impressive feature that was on many customers’ wish lists.

- There are the expected limitations such as only being able to vMotion between vGPUs of the same type and GPU technology.

- It brings to GPU workloads the management benefits that have been available on vSphere for a long time.

- Support for Intel FPGA

- The release also comes with support for the Intel Programmable Acceleration Card with Intel Arria 10 GX FPGA.

- It delivers near bare-metal performance, as the card is accessed through the VMware DirectPath I/O technology.
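The vMotion limitation mentioned in the list above (migration only between vGPUs of the same type and GPU technology) can be pictured as a simple pre-check. The Python sketch below is purely illustrative: the `VGpu` class, its fields and the host names are hypothetical, and it does not reflect how vSphere actually performs its compatibility checks.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class VGpu:
    """Hypothetical description of a vGPU on a host."""
    profile: str     # vGPU type (illustrative label)
    gpu_family: str  # underlying GPU technology (illustrative label)


def can_vmotion(source: VGpu, target: VGpu) -> bool:
    """True only if both the vGPU type and the GPU technology match."""
    return (source.profile == target.profile
            and source.gpu_family == target.gpu_family)


def eligible_targets(source: VGpu, hosts: Dict[str, VGpu]) -> List[str]:
    """Names of hosts whose vGPU is compatible with the source vGPU."""
    return sorted(name for name, vgpu in hosts.items()
                  if can_vmotion(source, vgpu))


if __name__ == "__main__":
    source = VGpu(profile="profile-a", gpu_family="family-x")
    hosts = {
        "host-1": VGpu("profile-a", "family-x"),  # same type and technology
        "host-2": VGpu("profile-b", "family-x"),  # different vGPU type
        "host-3": VGpu("profile-a", "family-y"),  # different GPU technology
    }
    print(eligible_targets(source, hosts))  # ['host-1']
```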

Roadmap:

During one of the sessions at VMworld, it was announced that Distributed Resource Scheduler (DRS) support is on the roadmap for NVIDIA vGPU and for DirectPath I/O GPU. DRS is responsible for balancing computing workloads with available resources in a virtualized environment. This should make GPUs easier to consume and provision. No timeline was given for a release date yet.
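As a rough mental model of what balancing GPU workloads across hosts could look like, the following Python sketch places each VM on the host with the most free vGPU slots. This is an assumed greedy strategy for illustration only; it is not VMware's DRS algorithm, and the function name and host names are hypothetical.

```python
from typing import Dict, List


def place_vms(vms: List[str], free_slots: Dict[str, int]) -> Dict[str, str]:
    """Greedy placement: each VM goes to the host with the most free vGPU slots."""
    remaining = dict(free_slots)  # copy so the caller's data is not modified
    placement: Dict[str, str] = {}
    for vm in vms:
        if not remaining:
            raise ValueError("no hosts available")
        # Pick the host that currently has the most free slots.
        host = max(remaining, key=lambda h: remaining[h])
        if remaining[host] == 0:
            raise ValueError(f"no free vGPU slot left for {vm}")
        placement[vm] = host
        remaining[host] -= 1
    return placement


if __name__ == "__main__":
    free = {"host-1": 2, "host-2": 1}
    print(place_vms(["vm1", "vm2", "vm3"], free))
```

A real scheduler would also have to honor constraints such as vGPU type compatibility and would rebalance over time, which this sketch leaves out.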

Sessions

Before leaving for VMworld I made a list of the sessions that I wanted to attend, all with topics around HPC, Big Data and AI. Although I couldn’t go to all of them, I wasn’t disappointed with the content of the sessions I attended. Thankfully, VMware recorded all the sessions from my list and they are available for replay on the VMworld On-Demand Video Library website.

There was a good mix of HPC, Big Data and AI related sessions for beginner, intermediate and expert audiences. Some of the sessions focused on vSphere while others focused on accelerator hardware such as GPUs, FPGAs and interconnect accelerators including RDMA. The content of some sessions overlapped, but I do recommend viewing all listed sessions.

For each session, I have listed an image with:

- title

- session ID

- presenter(s)

- direct link to the On-Demand Video Library

- description of the session next to the image

Additionally, clicking on an image will bring you directly to the recorded session.

NOTE: for the links to work you will need to pre-register with VMware first here.

Description:

A good introduction with definitions for many of the terms used in Machine Learning, followed by a TensorFlow demo using the Titanic passenger list for analysis.

Description:

Describes the new demands on IT infrastructure and the need for GPU-accelerated applications in the enterprise, followed by a presentation by the CEO of Bitfusion explaining their disaggregated platform for GPUs and FPGAs.

Description:

A good overview of high-performance workloads and how to obtain maximum performance. Josh Simons talks about breaking or bending the virtual abstraction to get the best possible performance. The presenters take you through the various components of high-performance workloads on vSphere and conclude with benchmarking concepts.

Description:

This session answers the question “how do I run high-performance workloads on VMware Cloud on AWS?”. The presenters also discuss the differences between VMware on-premises and VMware Cloud on AWS, which is an important aspect of enabling application mobility.

Description:

The presenters explain why vSphere is the optimal platform for high-performance computing. They go through the traditional HPC architectures and the reasons to virtualize HPC.

Description:

Excellent session for people who are familiar with VMware but are new to HPC/Big Data/AI or have had very little exposure to it. It is explained in a way that doesn’t require a heavy technical background to grasp the concepts.